AI Simply Expands Who You Already Are

The Contextual Self and Its Artificial Extension

There’s a lot of hullabaloo about what AI and its kissing cousin, the chatbot, are doing to us. While I have a certain reticence about what the future holds with this new technology, as anyone does when faced with something new, I’m consistently struck by the unquestioned assumptions people carry. We still operate as if some “core” self is under threat, and as if technology, in whatever form, is somehow removing us from who we are “meant to be,” whether through the evil machinations of capitalism or whatever other boogeyman people have created by giving agency to labels. I can’t help but wonder whether, when the first Neanderthal figured out fire, there was a group even then lamenting the loss of their humanity, gathering in their echo-caves to figure out how to get rid of this scary thing. Sure, fire had a lot of destructive capability, but it also created the glory that is medium-rare steak, so all in all, I think we came out ahead.

The Definition and Nature of Self

The question of what constitutes the self is not an abstract philosophical puzzle reserved for academics, however much I appreciate the ever-growing list of published books on the subject. It is the practical problem every person walks into a therapist’s office carrying, often without knowing it. Who am I when my relationships end? When my religion fails me? When my body changes, my career collapses, or my certainties dissolve? The urgency underneath those questions is the same in each case: there must be something here, some center that persists, and I need to find it to be authentically “me.”

The answer that psychology as a discipline, and most people, have typically defaulted to is the one found in dualistic religious traditions, and for individuals this is often true regardless of whether they are still religious believers. That answer? The self is a fixed, bounded interior thing. It is a self that exists behind the eyes, a core homunculus, separable from the world around it. This picture carries the power of intuition and matches how people relate to their “lived experience,” but it is more myth than science, and it creates more problems than it solves. If the self is a static container, singular and located in some imagined “core,” then change becomes a threat rather than a possibility. This is undoubtedly why so many who have left religion, and many others besides, delve into personal therapy to find their “authentic self,” or lament that some ideology kept them from being “true” to who they “really are.” The quest throughout becomes one of restoring some pure original state, a personalized Garden of Eden, rather than discovering the flexibility that nature has provided.

A more accurate account begins with the recognition that selfhood is relational and contextual from the start. To paraphrase Daniel Siegel: the mind (or self) is better defined as a process for the regulation of energy and information within our bodies and within our relationships, an emergent process that gives rise to our mental activities such as thinking, emotion, and the memories that provide the structure of who we believe ourselves to be. This, combined with the notion of predictive processing as it relates to cognition, means that each of us actively constructs the world in which we act and into which we project ourselves, based on our own idiosyncratic histories.

The self is not a thing that encounters experience from outside; it is constituted through experience, through the web of relationships, cultural inheritances, embodied sensations, and narrative frameworks we are embedded in from birth. Consider the metaphor of a loom: if someone were to pull a single thread (genetics, childhood attachment, cultural membership), at no point would they have explained the whole person. They would simply have isolated one strand from the weave from which the autobiographical self emerges.

This is why reducing the self to any single variable is always an epistemic failure, and why the diagnostic habit of collapsing a person’s full complexity into a label can result in real harm. What persists through the variable contexts of life is not a fixed thing but much more like an orientation or perspective: values instantiated through behavior, relationships, and commitments that constitute identity through action rather than through some form of essence.

The Theory of Extended Mind

In 1998, philosophers Andy Clark and David Chalmers published a paper that asked a deceptively simple question: where does the mind stop and the world begin? Their answer was that the boundary is far less fixed than we habitually assume. Cognitive processes are not confined to the skull. When we use a notebook to remember appointments, the notebook is doing genuine cognitive work. When we manipulate physical objects to solve a spatial problem, the environment is part of the process of creating understanding. The mind extends into the world, as we are embedded and embodied within it, through the tools, symbols, and relationships that extend our functioning.

We’ve always been tool-extended creatures. My joke at the beginning about the discovery of fire (funny at least to me) is simply one starting example. It’s an example so integral to the picture we have of ourselves as human beings that the myth of Prometheus stands as a testament to the moment Homo sapiens became what it is. Fire as a metaphor for knowledge, and by extension for technological development, has always carried within it the duality of destruction and progress. We move forward creatively by projecting a critical awareness and extending our capacity for change and development. Writing extends our memory. Clocks extend our temporal reasoning. Lakoff and Johnson’s ‘cognitive metaphor theory’ is fundamentally based on the extension of a body/mind embedded within nature. Tools do not replace cognitive functioning; they restructure the system as a whole, making possible new forms of thinking that could not have existed without them.

Clark and Chalmers remind us that the boundary between self and world is permeable, negotiated, and functionally defined. A blind person’s cane is not merely a tool they use (most especially if you’re a superhero named Daredevil); after sufficient practice, it becomes part of their perceptual system, transmitting information to them about the world. Cognitive extension is about functional expansion.

This matters for how we understand the relationship between our contextual selves and the tools we employ. The self, in its many iterations, is not compromised by extension; it is expressed through it. Values do not stay locked inside the person; they propagate outward through behavior, relationships, and the tools we choose to use. The question is never whether extension happens, but whether the means by which we extend ourselves carry the values and commitments we want to stay connected to, or whether they distort and displace them.

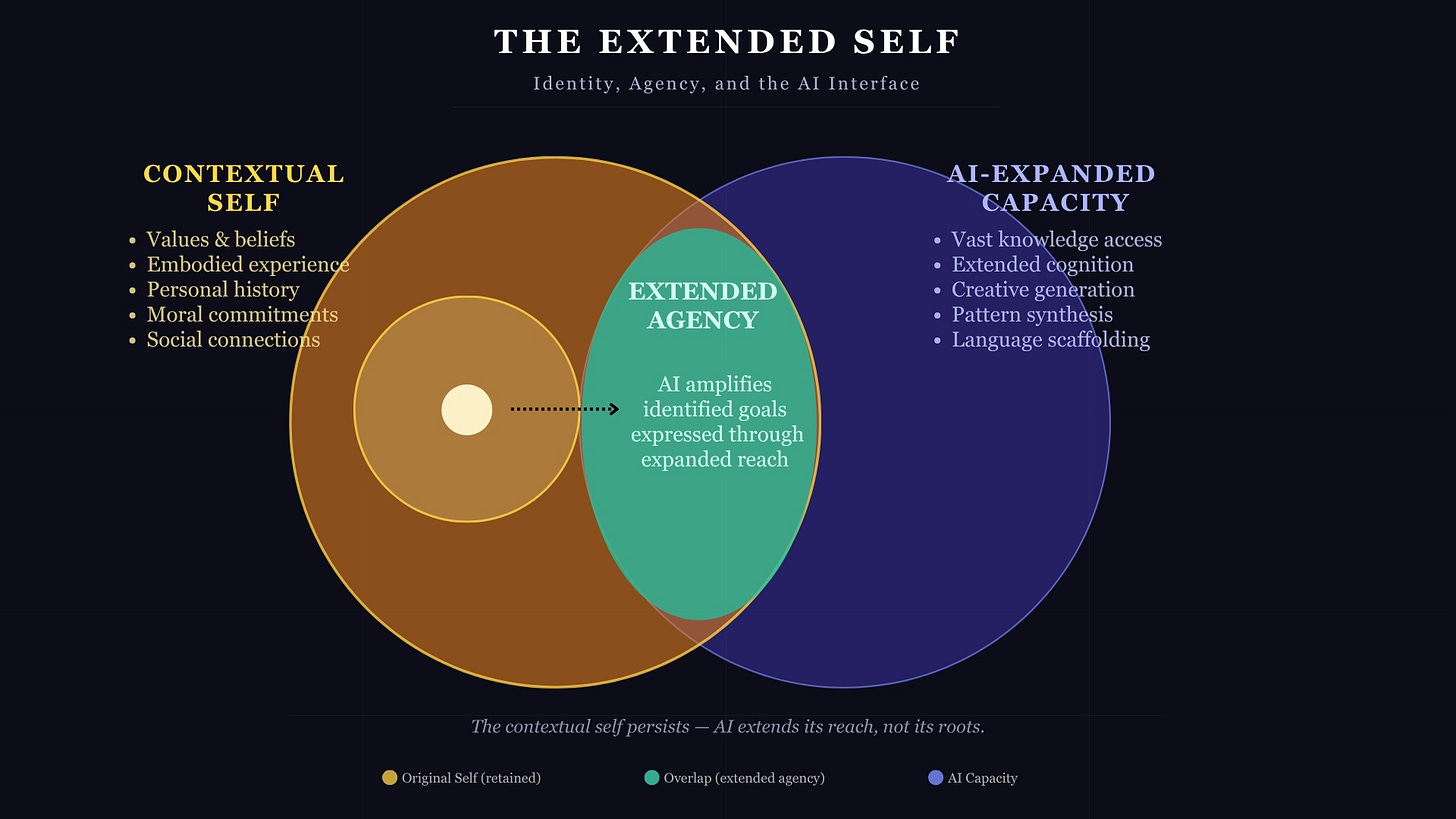

AI as a Tool for Expanding What We Already Believe

The diagram above is the argument I’m presenting. AI is simply the latest in a string of tools we’ve developed as a species. Our AI-expanded capacity is not the most meaningful thing to focus on (though we certainly should have conversations about it). Rather, the place where the contextual self meets artificial capability is where our focus belongs, and it is where our assumptions about what we are and how we think have the greatest consequences. As with any tool, the issue is extended agency. Agency means that who we are, our human context, remains the author. The tool serves us; it does not replace the author. Admittedly, as with any tool, we can use it in ways that lead to our annihilation, just as nuclear fission can give us either abundant energy or self-destruction through weaponization.

AI systems do not generate beliefs out of thin air and transfer them into users, any more than a hammer forces the person wielding it to swing it in a particular way. As anyone who has smashed a finger knows, the tool doesn’t come with an automatic download of instructions.

What AI does is process, organize, synthesize, and articulate the frameworks the person using it brings. This is functionally no different from a conversation with another human being. When a person of intellectual rigor and education uses a language model to explore an idea, what comes back is, hopefully (if the “conversation” was done well), a further refinement of that idea. The self’s orientation, and all the variables a person brings to the interactive space, direct the process. The tool provides reach, not direction. This is also why the growing obsession with detecting whether someone used AI is getting ridiculous. Because AI output follows good writing principles, like the rule of three, some people now claim that any text using those principles must have come from an AI, regardless of whether its author is established and knows what they’re doing.

The contextual self persists. AI extends our capacity, but it doesn’t replace what we’ve brought to the relational space. What can be outsourced is the labor of articulation, the breadth of pattern-matching, the synthesis of researched sources. This is useful, even as it brings with it unique difficulties associated with the tool.

The Limits That a Lack of Self-Critical Awareness Brings

Here is the uncomfortable part of AI extending ourselves: what if you’re an asshole or an idiot, or, to be kinder, ignorant to varying degrees? If the amplification depends entirely on the quality of the beliefs brought to it, we have a problem, because there are a lot of really bad and misinformed beliefs out there. A tool that extends critical inquiry produces insight, at least in theory. A tool that extends the reach of unexamined assumptions, biases, and self-serving narratives pushes ignorance to a place where it resists correction precisely because it has started sounding more intelligent.

The ‘fundamental attribution error,’ together with its close relative the actor-observer bias, is a bedrock cognitive bias in which we excuse our own bad choices by pointing to external circumstances while attributing the same behavior in others to fixed internal defects. We rationalize our behavior by constructing narratives that make sense within a world that, in order to avoid discomfort, we don’t want to question. This is the normal operation of a cognitive system designed to act quickly with incomplete information. The problem is that such a system, left unexamined, generates a self-concept that is largely a manifestation of that other most basic of heuristics or biases: confirmation bias.

The self that lacks self-critical awareness does not experience itself as distorted. It experiences itself as simply seeing things clearly. Our values feel obvious rather than chosen or contextually provided, and our blind spots are by definition invisible. This is the psychological condition in which any tool use becomes genuinely dangerous, and AI seems particularly so, perhaps because it mimics agency far better than a hammer ever will. Cognitive bias scaled up by a large language model does not become less biased; it becomes more persuasive.

There is no built-in process for criticism like the one found in scientific disciplines. Self-criticism is possible, but it is a constant choice, and given our predilection for avoiding discomfort, it is a choice that almost never feels good to make. That work means questioning, deconstructing received frameworks, and reckoning honestly with one’s motivated reasoning. Without it, what gets extended by using AI is the illusion of reflection, while the person is actually just thinking in circles, only more efficiently.

In using AI, we should be cultivating uncertainty as a cognitive virtue rather than a defect to be resolved. We should approach our values and beliefs with the same exploratory spirit one would want to bring to any serious inquiry. This means, to use a frequent phrase in the land of therapy, doing the work, so that technological tools serve discovery rather than merely accelerating the defense of what was already assumed.

Let’s get back to the loom (and incidentally why I call my therapeutic and coaching business “Life Weavings”). Each life is a complex interplay of many threads, and to pluck just one will never give you the full picture. The same is true of using AI. The full picture, or at least a fuller one, requires the willingness to look at the whole weave, to notice which threads have been avoided, which ones are tangled, and which ones you have been quietly refusing to examine.

That willingness will not be provided by AI (at least not yet). It is the profound expressive contribution of a self that has chosen, through sustained effort and honest questioning, to remain in relationship with its own uncertainty, and to find that a sense of wonder means wading through the discomfort of what isn’t known.

Follow me on Threads or Instagram for more psychology, humor, and photography.

Schedule a Consultation

Reach out to schedule a mental health session regarding issues related to religious trauma, meaning/purpose, and the struggles of communication.

How You Can Support the Newsletter

This post was free to read for all. If you like what I’m doing with The Humanity’s Values Newsletter, and want to support my work, there are several ways you can do it.

Like and Restack: Click the buttons at the top or bottom of the page to boost the post’s visibility on Substack.

Share: Send the post to friends or share it on social media.

Upgrade to Paid: A paid subscription gets you:

Full access to all new posts and the archive

Full access to all online classes on psychology and religious trauma

Full access to exclusive content such as weekly presentations on various topics of psychology and religion

The ability to post comments and engage in chat with the growing Humanity’s Values Newsletter community

If you could do any of the above, I’d be very grateful. Readers like you help keep this newsletter going and growing.

Thanks!

David